Automation is all the rage these days. Automation can thrash labor markets while simultaneously exploding productivity and profits. It will also make human jobs less boring (assuming we still have jobs). In this tutorial, we will learn how to use VBA to programmatically download files based on URLs. We might already know these URLs, or we may have to scrape them from the web and parse them. This is automation of a rather dull task, so implementing it hopefully has a positive impact on your work.

This tutorial is a little more involved than our recent tutorials. It contains multiple steps and pieces of code that fall outside the strict scope of “downloading files with VBA.” Where it makes sense, we’ll explain them, but at times we’ll gloss over the technical details and simply use the code. The goal is to lay out the practical requirements for downloading files from the internet using VBA. Once you finish the tutorial, you’ll be able to download files from URLs yourself.

With that said, let’s get started.

- Built-in Windows Function

- Downloading Our First File

- Downloading Multiple Files

- Scraping for URLs

- Ethical Questions

- Conclusion

The Built-in Windows Library Function

There are a number of ways to download files using VBA, including using an XMLHTTP request to stream the binary data or text associated with your file, and then using the SaveToFile method to finish saving the downloaded file. This is probably the most common way to download files, but we’re not going to do it this way.
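For comparison, the XMLHTTP route might look roughly like the sketch below. It is not the method used in the rest of this tutorial. The function name DownloadViaXmlHttp and its two parameters are made up for illustration, and the sketch assumes the MSXML2 and ADODB libraries are installed (both objects are created late-bound, so no references need to be set).

```vba
' Hypothetical helper: fetches a URL with XMLHTTP and writes the bytes to disk.
' Returns True only when the server answered 200 and the file was saved.
Function DownloadViaXmlHttp(ByVal url As String, ByVal savePath As String) As Boolean
    Dim http As Object, stream As Object

    On Error GoTo Failed
    Set http = CreateObject("MSXML2.XMLHTTP")
    http.Open "GET", url, False          ' False = synchronous request
    http.send

    If http.Status = 200 Then
        Set stream = CreateObject("ADODB.Stream")
        stream.Type = 1                  ' 1 = adTypeBinary
        stream.Open
        stream.Write http.responseBody   ' raw bytes of the response
        stream.SaveToFile savePath, 2    ' 2 = adSaveCreateOverWrite
        stream.Close
        DownloadViaXmlHttp = True
    End If
    Exit Function

Failed:
    ' Unreachable host, bad path, and similar problems end up here
    DownloadViaXmlHttp = False
End Function
```

This version needs more code than the single Windows function used below, which is one reason to prefer the latter in a short tutorial.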

In this tutorial, we’re going to download files using a Windows function called URLDownloadToFile. The URLDownloadToFile function is part of the Windows library urlmon. Before we can use it, we need to declare the function and connect to it from VBA. We can do that by placing this line of code at the top of a VBA module:

Private Declare PtrSafe Function URLDownloadToFile Lib "urlmon" _
    Alias "URLDownloadToFileA" (ByVal pCaller As LongPtr, ByVal szURL As String, _
    ByVal szFileName As String, ByVal dwReserved As Long, ByVal lpfnCB As LongPtr) As Long

I know this is a rather long function declaration. Libraries are basically sets of prewritten code, usually optimized by the library developer (Microsoft, for this library). All the code needed to access a URL, download the stream of bits and bytes, then structure them back into a file on the hard drive is conveniently wrapped into this single function. All we have to do is throw this declaration at the top to begin using it.

We can see the return type is Long, which means the function returns a whole number. This makes checking whether the download started much easier. We’ll get to that soon, but for now, the most important parts are the szURL and szFileName parameters: the URL to download and the full path where the file should be saved.

Downloading Our First File using VBA

Let’s say you find a picture you like and you want to download it. You could right-click then “Save Image as…”, or you could use VBA. Admittedly, using VBA would be inane for a single picture, but if you have hundreds of pictures to download, this task would be a perfect automation target. This tutorial focuses on downloading images, but the function is just as useful for downloading other file types.

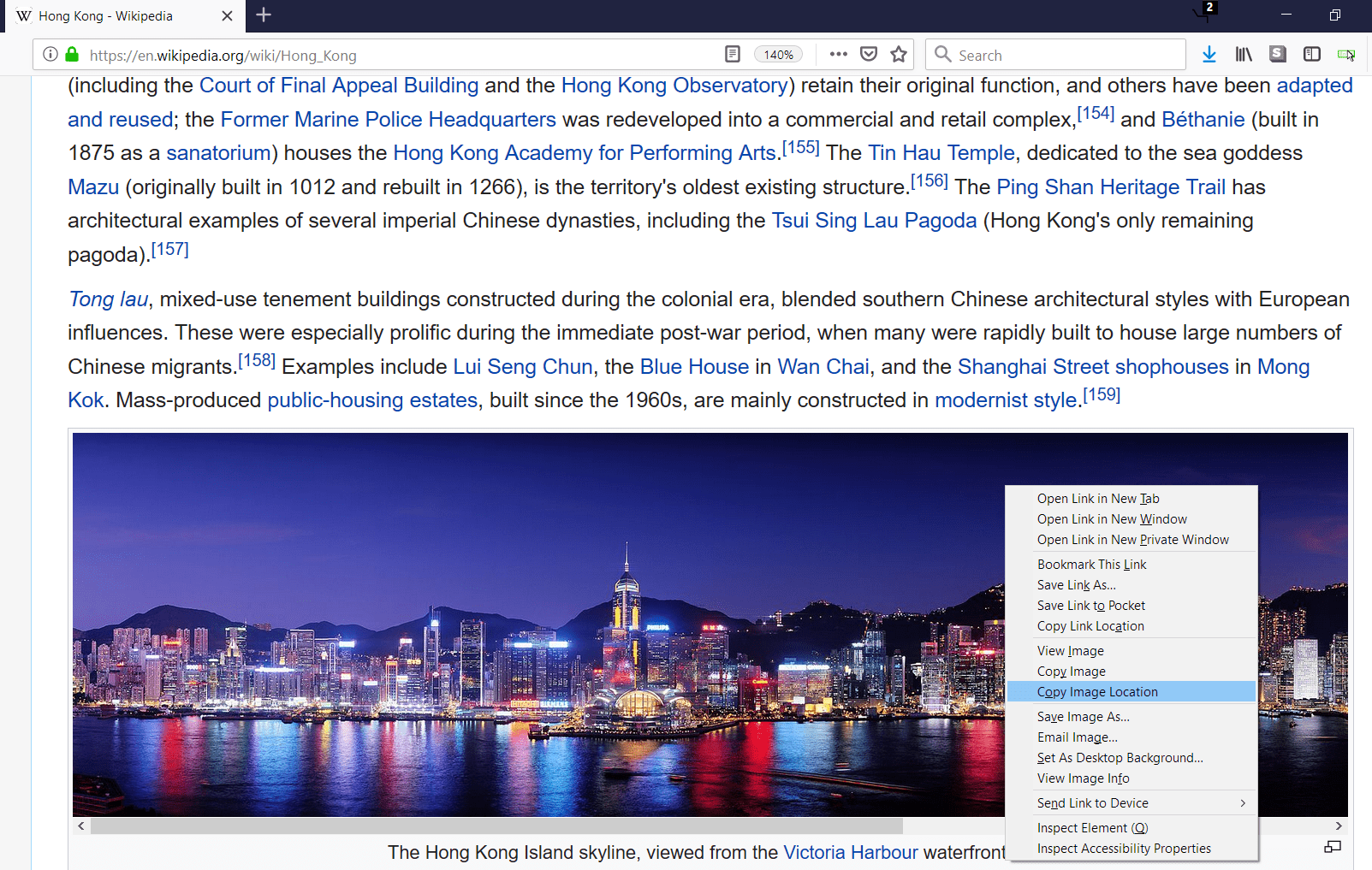

Anyway, we’re going to start with just one picture: a cityscape picture from Hong Kong’s Wikipedia page as of November 2018. In the HTML, every picture on every webpage is represented as a link to the picture’s very own URL, and that’s the URL we need to pass to the URLDownloadToFile function. You do not want to pass the URL of the webpage where the picture is embedded.

Under the architecture section on the Hong Kong Wikipedia page, there is a nice panorama of Hong Kong at night. We can access its URL by right-clicking and then clicking “Copy Image Location” or “Copy Image Address.” The words may change depending on your browser, but you get the idea.

Copy the URL of the image by clicking the "Copy Image Location" option.

You should have the following image path on your clipboard:

https://upload.wikimedia.org/wikipedia/commons/thumb/7/75/Hong_Kong_at_night.jpg/2400px-Hong_Kong_at_night.jpg. If you paste this path directly into a web browser’s URL box, you’ll get the image on its own page.

To download the picture with VBA, we can run a short subroutine, like this:

Private Declare PtrSafe Function URLDownloadToFile Lib "urlmon" _
    Alias "URLDownloadToFileA" (ByVal pCaller As LongPtr, ByVal szURL As String, _
    ByVal szFileName As String, ByVal dwReserved As Long, ByVal lpfnCB As LongPtr) As Long

Sub download_HK_picture()
    Dim imgsrc As String, dlpath As String
    ' URL of the image file itself, not of the page that embeds it
    imgsrc = "https://upload.wikimedia.org/wikipedia/commons/thumb/7/75/Hong_Kong_at_night.jpg/2400px-Hong_Kong_at_night.jpg"
    ' Destination folder; it must already exist
    dlpath = "C:\DownloadedPics\"
    URLDownloadToFile 0, imgsrc, dlpath & "HK Skyline.jpg", 0, 0
End Sub

If you have a folder named C:\DownloadedPics\, running this macro saves the picture there as HK Skyline.jpg.

If a folder with the path you specify doesn’t exist, your download will fail. You won’t get any error messages, but your file won’t be downloaded anywhere.

If the download folder doesn’t exist, don’t worry. You can make the folder using VBA before calling the URLDownloadToFile function. This approach is particularly useful if you frequently automate repetitive tasks. For example, you can timestamp the folder name when you run the program so you can go back through the folders later and know when you ran the macro.
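Creating a timestamped folder could be sketched as follows. The helper names StampedFolderPath, EnsureFolder and make_download_folder are hypothetical, and the base folder is the same C:\DownloadedPics\ used in the example above. Note that MkDir creates only one folder level per call, so the sketch creates the base folder first.

```vba
' Hypothetical helper: builds a path such as C:\DownloadedPics\2018-11-05_093000\
Function StampedFolderPath(ByVal baseFolder As String, ByVal stamp As Date) As String
    If Right$(baseFolder, 1) <> "\" Then baseFolder = baseFolder & "\"
    StampedFolderPath = baseFolder & Format$(stamp, "yyyy-mm-dd") & "_" & _
                        Format$(stamp, "hhnnss") & "\"
End Function

' Creates the folder if it is missing. The parent folder must already exist.
Sub EnsureFolder(ByVal folderPath As String)
    Dim p As String
    p = folderPath
    If Right$(p, 1) = "\" Then p = Left$(p, Len(p) - 1)   ' drop trailing backslash
    If Len(Dir(p, vbDirectory)) = 0 Then MkDir p
End Sub

Sub make_download_folder()
    Dim dlpath As String
    EnsureFolder "C:\DownloadedPics\"
    dlpath = StampedFolderPath("C:\DownloadedPics\", Now)
    EnsureFolder dlpath
    ' dlpath can now be used as the destination folder for URLDownloadToFile
    Debug.Print dlpath
End Sub
```

Passing the timestamp in as a parameter, rather than calling Now inside the helper, keeps the path-building part easy to check on its own.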

Downloading Multiple Files

If you’re going to download multiple files, you likely already have a list of URLs. Lists are nice, because you can stick them in an array then iterate through your array with a For Loop. You just need to program a way to change the downloaded location’s filepath each time, otherwise you’ll overwrite the previous download with each new file. It’s also a smart idea to check for delays when downloading multiple files. We’ll explain why momentarily.

Designing with Uniqueness in Mind

If the list of files you want to download is small enough, you could manually label each URL and use the label as the filename. For example, you could have 2 columns in Excel: the first column will contain the file name and the second column will contain the URL you want to download, like this:

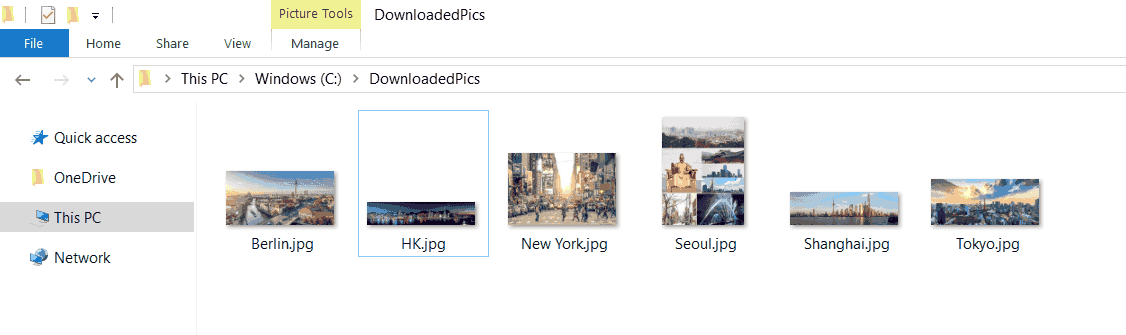

A list of cities (labels) and a URL with a photo of the respective city

Now we can iterate through this list, downloading each picture and assigning it a unique name.

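Private Declare PtrSafe Function URLDownloadToFile Lib "urlmon" _
    Alias "URLDownloadToFileA" (ByVal pCaller As LongPtr, ByVal szURL As String, _
    ByVal szFileName As String, ByVal dwReserved As Long, ByVal lpfnCB As LongPtr) As Long

Sub download_multiple_photos()
    Dim i As Long
    Dim dlpath As String, imgsrc As String, imgname As String
    dlpath = "C:\DownloadedPics\"
    ' Rows 2 to 7 hold the labels (column 1) and URLs (column 2)
    For i = 2 To 7
        imgsrc = Cells(i, 2)
        imgname = Cells(i, 1)
        ' The label becomes the file name, so each download is unique
        URLDownloadToFile 0, imgsrc, dlpath & imgname & ".jpg", 0, 0
    Next i
End Sub

Since we know we only want to download six pictures, the loop bounds are hard-coded to rows 2 through 7.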

The destination folder with six downloaded pictures of cities
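If the list grows or the labels contain characters Windows does not allow in file names, the loop above needs a little more care. The following is a minimal sketch under the same layout (labels in column 1, URLs in column 2 of the active sheet, starting at row 2). The names SafeFileName and download_listed_photos are made up for illustration.

```vba
Private Declare PtrSafe Function URLDownloadToFile Lib "urlmon" _
    Alias "URLDownloadToFileA" (ByVal pCaller As LongPtr, ByVal szURL As String, _
    ByVal szFileName As String, ByVal dwReserved As Long, ByVal lpfnCB As LongPtr) As Long

' Hypothetical helper: replaces characters that are invalid in Windows file names
Function SafeFileName(ByVal label As String) As String
    Dim badChars As Variant, c As Variant
    badChars = Array("\", "/", ":", "*", "?", """", "<", ">", "|")
    For Each c In badChars
        label = Replace(label, c, "_")
    Next c
    SafeFileName = Trim$(label)
End Function

Sub download_listed_photos()
    Dim lastRow As Long, i As Long
    Dim dlpath As String, imgsrc As String, imgname As String

    dlpath = "C:\DownloadedPics\"
    ' Last used row in column 1, so the loop follows the length of the list
    lastRow = Cells(Rows.Count, 1).End(xlUp).Row

    For i = 2 To lastRow
        imgname = SafeFileName(CStr(Cells(i, 1).Value))
        imgsrc = CStr(Cells(i, 2).Value)
        ' Skip rows where the label or the URL is missing
        If Len(imgname) > 0 And Len(imgsrc) > 0 Then
            URLDownloadToFile 0, imgsrc, dlpath & imgname & ".jpg", 0, 0
        End If
    Next i
End Sub
```

Two labels that differ only in replaced characters would still collide, so truly unique labels remain the list owner’s responsibility.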

Timeout Code to Track Problems

If you download several hundred files per run, you will want to track which files are missing. An easy way to handle this is to check the URLDownloadToFile return value and, if it is not the desired result, wait a couple of seconds and try again. If the second attempt also fails, move to the next URL and mark that file’s download as failed.

A return value of zero (0) means the download has started successfully. Barring some connection interruption or memory failure downloading a gigantic file, it should finish. Thus, a simple yet effective way to track download problems is to check whether the result of the function is zero. If it isn’t, try it one more time before moving on. The other documented return values signal an out-of-memory condition (E_OUTOFMEMORY) or an invalid or unreachable resource (INET_E_DOWNLOAD_FAILURE). If you want to be very meticulous, you could mark the type of failure in your program (hint: use an array).
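To record the type of failure, the return values described above can be translated into readable text. The sketch below assumes the standard Windows HRESULT codes for these conditions; the function name DescribeDownloadResult and the wording of the messages are made up for illustration.

```vba
' HRESULT codes for the documented return values of URLDownloadToFile
Private Const S_OK As Long = 0
Private Const E_OUTOFMEMORY As Long = &H8007000E
Private Const INET_E_DOWNLOAD_FAILURE As Long = &H800C0008

' Hypothetical helper: turns a return value into a short description
Function DescribeDownloadResult(ByVal result As Long) As String
    Select Case result
        Case S_OK
            DescribeDownloadResult = "download started"
        Case E_OUTOFMEMORY
            DescribeDownloadResult = "out of memory"
        Case INET_E_DOWNLOAD_FAILURE
            DescribeDownloadResult = "resource invalid or unreachable"
        Case Else
            ' Anything else is reported as a raw hexadecimal code
            DescribeDownloadResult = "unexpected result 0x" & Hex$(result)
    End Select
End Function
```

A call such as DescribeDownloadResult(result) can then be stored next to the file name whenever a download does not return zero.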

For a more robust solution, you can use VBA to check whether or not the files exist before exiting your macro. If they don’t, you know the download failed or it’s still downloading.

Private Declare PtrSafe Function URLDownloadToFile Lib "urlmon" _
    Alias "URLDownloadToFileA" (ByVal pCaller As LongPtr, ByVal szURL As String, _
    ByVal szFileName As String, ByVal dwReserved As Long, ByVal lpfnCB As LongPtr) As Long

Sub timeout_for_bad_starts()
    Dim i As Long, result As Long
    Dim dlpath As String, imgsrc As String, imgname As String
    dlpath = "C:\DownloadedPics\"
    For i = 2 To 7
        imgsrc = Cells(i, 2)
        imgname = Cells(i, 1)
        result = URLDownloadToFile(0, imgsrc, dlpath & imgname & ".jpg", 0, 0)
        If result <> 0 Then
            ' First attempt failed: wait three seconds, then retry once
            Application.Wait (Now + TimeValue("00:00:03"))
            result = URLDownloadToFile(0, imgsrc, dlpath & imgname & ".jpg", 0, 0)
        End If
        'if the result is still not zero, mark the failure somehow and move on
    Next i
End Sub

In this example, we capture the returned long integer and check whether or not it’s a zero. If it’s not, we force the macro to try it again. If it fails a second time, we just give up and move on. We don’t implement a way to flag it as failed in this macro, but I recommend you store details about the failed download into an array before moving on.
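Flagging failures and checking that each file actually landed on disk could be sketched like this. It uses a Collection rather than the array suggested above, simply because a Collection grows without ReDim. The names FileExists and download_and_log_failures are hypothetical, and the sheet layout is the same as in the earlier examples.

```vba
Private Declare PtrSafe Function URLDownloadToFile Lib "urlmon" _
    Alias "URLDownloadToFileA" (ByVal pCaller As LongPtr, ByVal szURL As String, _
    ByVal szFileName As String, ByVal dwReserved As Long, ByVal lpfnCB As LongPtr) As Long

' Hypothetical helper: True when a file exists at the given path
Function FileExists(ByVal filePath As String) As Boolean
    If Len(filePath) > 0 Then FileExists = (Len(Dir(filePath)) > 0)
End Function

Sub download_and_log_failures()
    Dim failed As New Collection
    Dim dlpath As String, target As String
    Dim i As Long, attempt As Long, result As Long
    Dim entry As Variant

    dlpath = "C:\DownloadedPics\"
    For i = 2 To 7
        target = dlpath & Cells(i, 1) & ".jpg"
        For attempt = 1 To 2
            result = URLDownloadToFile(0, CStr(Cells(i, 2)), target, 0, 0)
            If result = 0 Then Exit For
            ' Pause only before the retry, not after the final attempt
            If attempt = 1 Then Application.Wait Now + TimeValue("00:00:03")
        Next attempt

        ' Flag the row if the call failed or no file appeared on disk
        If result <> 0 Or Not FileExists(target) Then
            failed.Add Cells(i, 1) & " (result " & result & ")"
        End If
    Next i

    ' Report the failures in the Immediate window
    For Each entry In failed
        Debug.Print "Failed: " & entry
    Next entry
End Sub
```

Writing the failures to a spare column instead of the Immediate window would work just as well and keeps the log next to the URL list.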

Scraping for File Locations

If you want to grab every picture on a website, you can capture the URLs of the images via webscraping.

Since images are tagged with img in the webpage’s HTML, you can use the HTML document’s getElementsByTagName method to grab all the image elements. You can easily adapt our VBA webscraping tutorial to grab the img tag. You will then need to access the src property of each Item, which contains the URL of the image file. If the src property is empty for some reason, the image may sit inside a link, in which case the href property of that enclosing link might point to the file.

The modification from the webscraping tutorial would follow logic like this:

'text = table_data.Item(i).Children(j).innertext 'Comment out the line similar to this and add the next line instead
imgURL = table_data.Item(i).src

The example we used for webscraping used the Wikipedia page on country and dependency populations, which has a nice table of countries with ranks, populations, and little flags. To practice, try to scrape the table, extract the country name, scrape the associated flag’s image URL, download the flag picture file, and use the extracted country name to name the file. Paste your solution in the comments section!

If you aren’t so ambitious, you could build the file name from the loop counter instead, with something like imgname = dlpath & i & ".jpg", where i is the for-loop control variable. This method would match the flag with the country’s population rank, so there is some practicality to doing it this simpler way.

If you’re lucky, the photos on the website might have a title property, and you could use that for naming, too.
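For readers who have not followed the webscraping tutorial, collecting the src of every img tag might look like the sketch below. It does not reproduce that tutorial’s code. The names ExtractImageUrls and list_page_images are hypothetical, the page URL is just an example, and the sketch assumes the late-bound "htmlfile" and "MSXML2.XMLHTTP" objects are available on your machine.

```vba
' Hypothetical helper: returns the src of every img tag found in an HTML string
Function ExtractImageUrls(ByVal html As String) As Collection
    Dim doc As Object, imgs As Object
    Dim urls As New Collection
    Dim i As Long

    Set doc = CreateObject("htmlfile")      ' late-bound HTML document
    doc.body.innerHTML = html
    Set imgs = doc.getElementsByTagName("img")

    For i = 0 To imgs.Length - 1
        ' Keep only images that actually carry a src value
        If Len(imgs.Item(i).src) > 0 Then urls.Add imgs.Item(i).src
    Next i
    Set ExtractImageUrls = urls
End Function

Sub list_page_images()
    Dim http As Object, url As Variant

    Set http = CreateObject("MSXML2.XMLHTTP")
    http.Open "GET", "https://en.wikipedia.org/wiki/Hong_Kong", False   ' example page
    http.send

    ' Print each image URL; these could be fed to URLDownloadToFile instead
    For Each url In ExtractImageUrls(http.responseText)
        Debug.Print url
    Next url
End Sub
```

One caveat: src values that are relative in the page may come back with an about: prefix when parsed this way, so they may need to be rebuilt into full URLs before downloading.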

Ethical Questions

You can automate the download of hundreds or thousands of files rather easily using this method, especially when coupled with a webscraper that’s simply looking for a tag, like the img tag, to grab any and all files on a website.

There are three ethical issues here.

First, you’ll be requesting server time from someone or some company, and automating this process might put strain on their infrastructure. In the worst case, you might get your IP banned from accessing the server, but even if you don’t, there’s the ethical question of using up resources others are providing for free and fair use. Huge sites, like Google or Amazon, probably won’t notice at all. But smaller companies, especially those running their own servers, might be affected.

The second ethical question revolves around hotlinking and revenue theft. Basically, if you already know the image URL and don’t need to visit the main webpage (such as if you found the image through Google’s Image Search and just grabbed the URL), you’ll be depriving the host of any advertising revenue. On one hand, you won’t be taking up server resources to load the main page, but you also won’t be rewarding the host with any advertising revenue or traffic in exchange for their information. This could harm their search engine rankings.

The third ethical question, and the one that plagued me the most before posting this tutorial, is that someone with criminal intent could use the function for more sinister macros. For example, someone could write a macro to automatically download viruses or other nefarious file types to someone’s machine. I’m posting this tutorial because many people would benefit from being able to automatically download files using VBA. Don’t be a jerk and abuse the knowledge you’ve learned as a VBA developer.

While this kind of automation probably doesn’t carry ethical questions of eliminating someone’s job, it does carry some ethical implications. You should think about such ethical considerations before automating any task.

Conclusion

In this tutorial, you learned how to use VBA to download files. We used images in our examples, but you can download any file type. The entire automation process can be quite long and may require a lot of research, but this tutorial will get you on your way. Automation can help reduce tedious work, but always remember to consider the ethical implications that arise from automating work, from job elimination to revenue theft.

I hope you found this helpful.